* refactor: unify providers and models tables

- Rename `llm_providers` → `providers`, `llm_provider_oauth_tokens` → `provider_oauth_tokens`

- Remove `tts_providers` and `tts_models` tables; speech models now live in the unified `models` table with `type = 'speech'`

- Replace top-level `api_key`/`base_url` columns with a JSONB `config` field on `providers`

- Rename `llm_provider_id` → `provider_id` across all references

- Add `edge-speech` client type and `conf/providers/edge.yaml` default provider

- Create new read-only speech endpoints (`/speech-providers`, `/speech-models`) backed by filtered views of the unified tables

- Remove old TTS CRUD handlers; simplify speech page to read-only + test

- Update registry loader to skip malformed YAML files instead of failing entirely

- Fix YAML quoting for model names containing colons in openrouter.yaml

- Regenerate sqlc, swagger, and TypeScript SDK
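
The move from top-level `api_key`/`base_url` columns to a JSONB `config` field, listed above, can be pictured with a small Go sketch. The `ProviderConfig` type and the key names inside the JSON object are assumptions for illustration, carried over from the old column names; the real schema may store different or additional keys.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// ProviderConfig is a hypothetical shape for the JSONB `config` column.
// The keys api_key and base_url are assumed from the old column names.
type ProviderConfig struct {
	APIKey  string `json:"api_key,omitempty"`
	BaseURL string `json:"base_url,omitempty"`
}

// EncodeConfig serializes a config for storage in a JSONB column.
func EncodeConfig(c ProviderConfig) (string, error) {
	b, err := json.Marshal(c)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

// DecodeConfig parses a stored JSONB value back into a ProviderConfig.
func DecodeConfig(raw string) (ProviderConfig, error) {
	var c ProviderConfig
	err := json.Unmarshal([]byte(raw), &c)
	return c, err
}

func main() {
	raw, err := EncodeConfig(ProviderConfig{
		APIKey:  "sk-example",
		BaseURL: "https://api.example.com/v1",
	})
	if err != nil {
		panic(err)
	}
	fmt.Println(raw)

	// A provider that needs no credentials can store an empty object.
	empty, err := EncodeConfig(ProviderConfig{})
	if err != nil {
		panic(err)
	}
	fmt.Println(empty)
}
```

Keeping credentials in one JSON object means a provider type with different settings does not need a new column, at the cost of losing column-level constraints.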

* fix: exclude speech providers from providers list endpoint

ListProviders now filters out client_type matching '%-speech' so Edge

and future speech providers no longer appear on the Providers page.

ListSpeechProviders uses the same pattern match instead of hard-coding

'edge-speech'.

* fix: use explicit client_type list instead of LIKE pattern

Replace '%-speech' pattern with explicit IN ('edge-speech') for both

ListProviders (exclusion) and ListSpeechProviders (inclusion). New

speech client types must be added to both queries.
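
As a rough illustration of what the explicit list implies, the Go sketch below partitions providers the way the two queries are described: one set excludes the listed speech client types, the other includes only them. The `Provider` type, the `SplitProviders` helper, and the `openai` and `custom-speech` client types are hypothetical; the real filtering happens in SQL.

```go
package main

import "fmt"

// Provider is a hypothetical, trimmed-down row from the providers table.
type Provider struct {
	Name       string
	ClientType string
}

// speechClientTypes mirrors the explicit IN ('edge-speech') list.
// A new speech client type has to be added here to be treated as speech.
var speechClientTypes = map[string]bool{
	"edge-speech": true,
}

// SplitProviders separates general providers (the exclusion query) from
// speech providers (the inclusion query) using the explicit list.
func SplitProviders(all []Provider) (general, speech []Provider) {
	for _, p := range all {
		if speechClientTypes[p.ClientType] {
			speech = append(speech, p)
		} else {
			general = append(general, p)
		}
	}
	return general, speech
}

func main() {
	all := []Provider{
		{Name: "OpenAI", ClientType: "openai"},
		{Name: "Edge", ClientType: "edge-speech"},
		// Not in the explicit list, so it is still treated as general.
		{Name: "Custom", ClientType: "custom-speech"},
	}
	general, speech := SplitProviders(all)
	fmt.Println("general:", len(general), "speech:", len(speech))
}
```

Unlike the earlier `'%-speech'` pattern, an unlisted type such as `custom-speech` is not picked up automatically, which is the trade-off the commit accepts.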

* fix: use EXECUTE for dynamic SQL in migrations referencing old schema

PL/pgSQL pre-validates column/table references in static SQL statements

inside DO blocks before evaluating IF/RETURN guards. This caused

migrations 0010-0061 to fail on fresh databases where the canonical

schema uses `providers`/`provider_id` instead of `llm_providers`/

`llm_provider_id`.

Wrap all SQL that references potentially non-existent old schema objects

(llm_providers, llm_provider_id, tts_providers, tts_models, etc.) in

EXECUTE strings so they are only parsed at runtime when actually reached.

* fix: revert canonical schema to use llm_providers for migration compatibility

The CI migrations workflow (up → down → up) failed because 0061 down

renames `providers` back to `llm_providers`, but 0001 down only dropped

`providers` — leaving `llm_providers` as a remnant. On the second

migrate up, 0010 found the stale `llm_providers` and tried to reference

`models.llm_provider_id` which no longer existed.

Revert 0001 canonical schema to use original names (llm_providers,

tts_providers, tts_models) so incremental migrations work naturally and

0061 handles the final rename. Remove EXECUTE wrappers and unnecessary

guards from migrations that now always operate on llm_providers.

* fix: icons

* fix: sync canonical schema with 0061 migration to fix sqlc column mismatch

0001_init.up.sql still used old names (llm_providers, llm_provider_id)

and included dropped tts_providers/tts_models tables. sqlc could not

parse the PL/pgSQL EXECUTE in migration 0061, so generated code retained

stale columns (input_modalities, supports_reasoning) causing runtime

"column does not exist" errors when adding models.

- Update 0001_init.up.sql to current schema (providers, provider_id,

no tts tables, add provider_oauth_tokens)

- Use ALTER TABLE IF EXISTS in 0010/0041/0042 for backward compat

- Regenerate sqlc

* fix: guard all legacy migrations against fresh schema for CI compat

On fresh databases, 0001_init.up.sql creates providers/provider_id

(not llm_providers/llm_provider_id). Migrations 0013, 0041, 0046, 0047

referenced the old names without guards, causing CI migration failures.

- 0013: check llm_provider_id column exists before adding old constraint

- 0041: check llm_providers table exists before backfill/constraint DDL

- 0046: wrap CREATE TABLE in DO block with llm_providers existence check

- 0047: use ALTER TABLE IF EXISTS + DO block guard

Memoh

Self-hosted, always-on AI agent platform that runs in containers.

📌 Introduction to Memoh - The Case for an Always-On, Containerized Home Agent

Memoh is an always-on, containerized AI agent system. Create multiple AI bots, each running in its own isolated container with persistent memory, and interact with them across Telegram, Discord, Lark (Feishu), QQ, Matrix, WeCom, WeChat, Email, or the built-in Web UI. Bots can execute commands, edit files, browse the web, call external tools via MCP, and remember everything — like giving each bot its own computer and brain.

Quick Start

One-click install (requires Docker):

curl -fsSL https://memoh.sh | sudo sh

Silent install with all defaults: curl -fsSL ... | sudo sh -s -- -y

Or manually:

git clone --depth 1 https://github.com/memohai/Memoh.git

cd Memoh

cp conf/app.docker.toml config.toml

# Edit config.toml

sudo docker compose up -d

Install a specific version:

curl -fsSL https://memoh.sh | sudo MEMOH_VERSION=v0.6.0 sh

Use CN mirror for slow image pulls:

curl -fsSL https://memoh.sh | sudo USE_CN_MIRROR=true sh

On macOS, or if your user is in the docker group, sudo is not required.

Visit http://localhost:8082 after startup. Default login: admin / admin123

See DEPLOYMENT.md for custom configuration and production setup.

Why Memoh?

Memoh is built for always-on continuity — an AI that stays online, and a memory that stays yours.

- Lightweight & Fast: Built with Go as home/studio infrastructure, runs efficiently on edge devices.

- Containerized by default: Each bot gets an isolated container with its own filesystem, network, and tools.

- Hybrid split: Cloud inference for frontier model capability, local-first memory and indexing for privacy.

- Multi-user first: Explicit sharing and privacy boundaries across users and bots.

- Full graphical configuration: Configure bots, channels, MCP, skills, and all settings through a modern web UI — no coding required.

Features

Core

- 🤖 Multi-Bot & Multi-User: Create multiple bots that chat privately, in groups, or with each other. Bots distinguish individual users in group chats, remember each person's context, and support cross-platform identity binding.

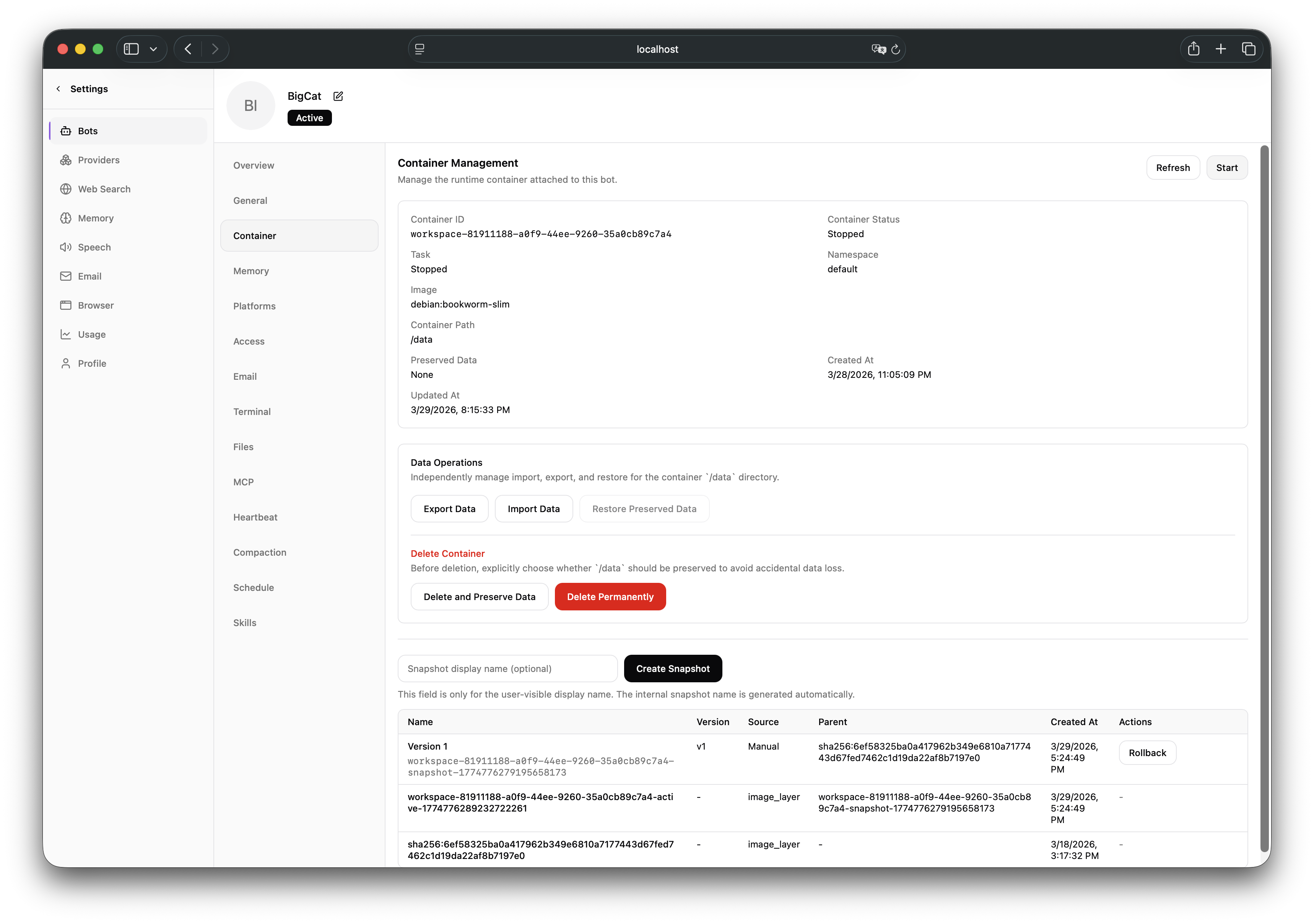

- 📦 Containerized: Each bot runs in its own isolated containerd container with a dedicated filesystem and network — like having its own computer. Supports snapshots, data export/import, and versioning.

- 🧠 Memory Engineering: LLM-driven fact extraction, hybrid retrieval (dense + sparse + BM25), 24-hour context loading, memory compaction & rebuild. Pluggable backends: Built-in (off / sparse / dense), Mem0, OpenViking.

- 💬 9 Channels: Telegram, Discord, Lark (Feishu), QQ, Matrix, WeCom, WeChat, Email (Mailgun / SMTP / Gmail OAuth), and built-in Web UI — with unified streaming, rich text, and attachments.

Agent Capabilities

- 🔧 MCP (Model Context Protocol): Full MCP support (HTTP / SSE / Stdio / OAuth). Connect external tool servers for extensibility; each bot manages its own independent MCP connections.

- 🌐 Browser Automation: Headless Chromium/Firefox via Playwright — navigate, click, fill forms, screenshot, read accessibility trees, manage tabs.

- 🎭 Skills & Subagents: Define bot personality via modular skill files; delegate complex tasks to sub-agents with independent context.

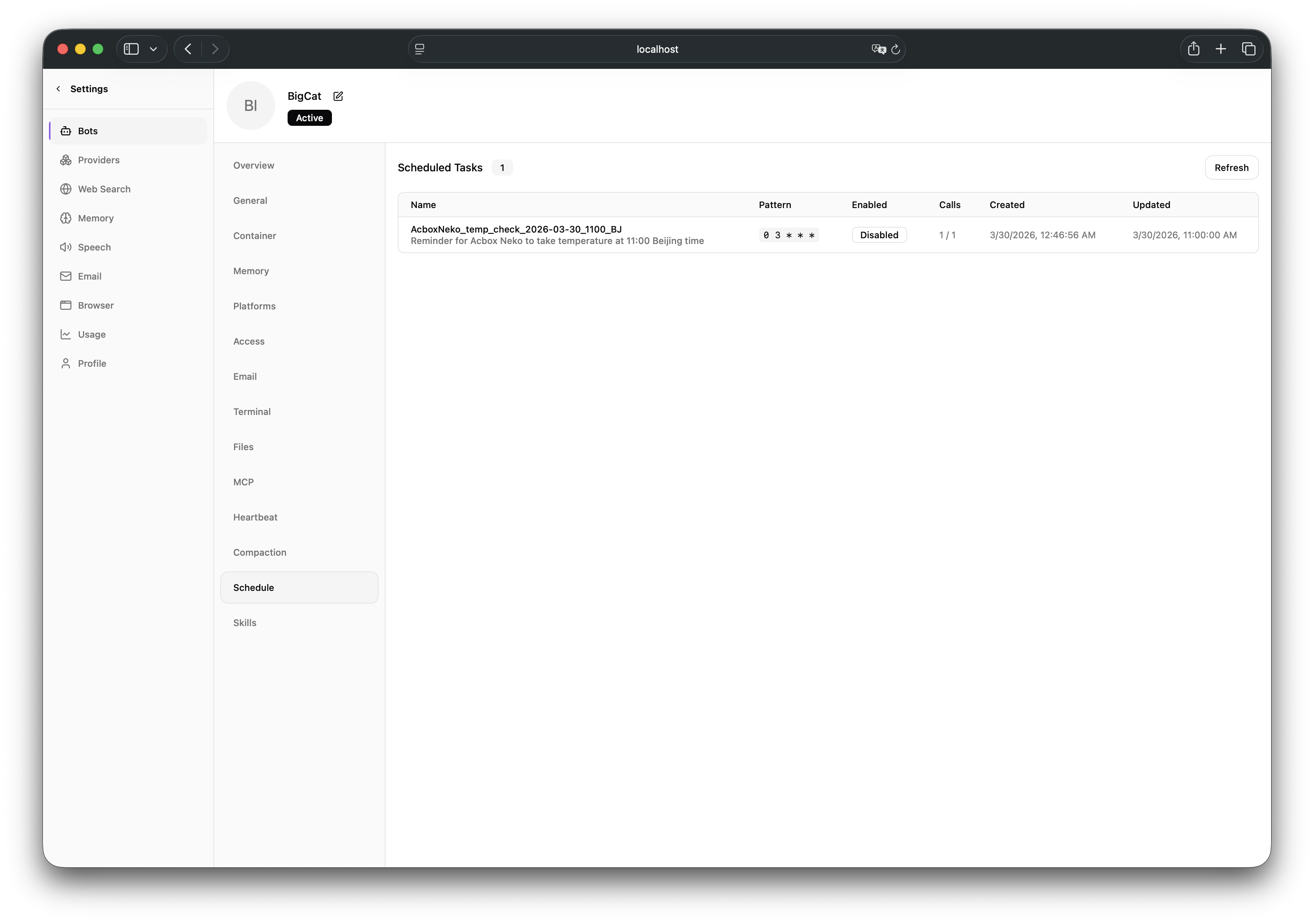

- ⏰ Automation: Cron-based scheduled tasks and periodic heartbeat for autonomous bot activity.

Management

- 🖥️ Web UI: Modern dashboard (Vue 3 + Tailwind CSS) — streaming chat, tool call visualization, file manager, visual configuration for all settings. Dark/light theme, i18n.

- 🔐 Access Control: Priority-based ACL rules with allow/deny effects, scoped by channel identity, channel type, or conversation.

- 🧪 Multi-Model: Any OpenAI-compatible, Anthropic, or Google provider. Per-bot model assignment, provider OAuth, and automatic model import.

- 🚀 One-Click Deploy: Docker Compose with automatic migration, containerd setup, and CNI networking.
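
The access control item above mentions priority-based rules with allow/deny effects. One way such evaluation could work is sketched below in Go. Everything here is an assumption for illustration: the type names, the convention that a larger priority number wins, the treatment of empty scope fields as wildcards, and the caller-supplied default are not taken from Memoh's implementation.

```go
package main

import (
	"fmt"
	"sort"
)

type Effect string

const (
	Allow Effect = "allow"
	Deny  Effect = "deny"
)

// Request describes who is talking to the bot and where.
type Request struct {
	ChannelIdentity string
	ChannelType     string
	Conversation    string
}

// Rule is a hypothetical ACL rule. Empty scope fields act as wildcards.
type Rule struct {
	Priority        int
	Effect          Effect
	ChannelIdentity string
	ChannelType     string
	Conversation    string
}

// matches reports whether every non-empty scope field equals the request's.
func (r Rule) matches(req Request) bool {
	return (r.ChannelIdentity == "" || r.ChannelIdentity == req.ChannelIdentity) &&
		(r.ChannelType == "" || r.ChannelType == req.ChannelType) &&
		(r.Conversation == "" || r.Conversation == req.Conversation)
}

// Evaluate returns the effect of the highest-priority matching rule,
// falling back to def when no rule matches.
func Evaluate(rules []Rule, req Request, def Effect) Effect {
	sorted := append([]Rule(nil), rules...) // copy so the caller's slice is untouched
	sort.SliceStable(sorted, func(i, j int) bool {
		return sorted[i].Priority > sorted[j].Priority
	})
	for _, r := range sorted {
		if r.matches(req) {
			return r.Effect
		}
	}
	return def
}

func main() {
	rules := []Rule{
		{Priority: 10, Effect: Deny, ChannelType: "telegram"},
		{Priority: 20, Effect: Allow, ChannelIdentity: "alice"},
	}
	fmt.Println(Evaluate(rules, Request{ChannelIdentity: "alice", ChannelType: "telegram"}, Deny))
	fmt.Println(Evaluate(rules, Request{ChannelIdentity: "bob", ChannelType: "telegram"}, Allow))
}
```

In this sketch the narrower, higher-priority rule for `alice` overrides the broad Telegram deny, while `bob` falls through to the deny rule.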

Memory System

Memoh's memory system is built around Memory Providers — pluggable backends that control how a bot stores, retrieves, and manages long-term memory.

| Provider | Description |

|---|---|

| Built-in | Self-hosted, ships with Memoh. Three modes: Off (file-based, no vector search), Sparse (neural sparse vectors via local model, no API cost), Dense (embedding-based semantic search via Qdrant). |

| Mem0 | SaaS memory via the Mem0 API. |

| OpenViking | Self-hosted or SaaS memory with its own API. |

Each bot binds one provider. During chat, the bot automatically extracts key facts from every conversation turn and stores them as structured memories. On each new message, the most relevant memories are retrieved via hybrid search and injected into the bot's context — giving it personalized, long-term recall across conversations.
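
The text does not say how the dense, sparse, and BM25 result lists are combined. As a stand-in, the Go sketch below uses reciprocal rank fusion (RRF), a common technique for merging ranked lists; it is not claimed to be Memoh's method. The `FuseRRF` function, the memory IDs, and the constant `k = 60` are all illustrative assumptions.

```go
package main

import (
	"fmt"
	"sort"
)

// FuseRRF merges several ranked lists of memory IDs with reciprocal rank
// fusion: each ID scores the sum of 1/(k + rank) over the lists it appears
// in, where rank starts at 1. Higher total scores come first.
func FuseRRF(k float64, rankings ...[]string) []string {
	scores := map[string]float64{}
	for _, ranking := range rankings {
		for i, id := range ranking {
			scores[id] += 1.0 / (k + float64(i+1))
		}
	}

	ids := make([]string, 0, len(scores))
	for id := range scores {
		ids = append(ids, id)
	}
	sort.Slice(ids, func(a, b int) bool {
		if scores[ids[a]] != scores[ids[b]] {
			return scores[ids[a]] > scores[ids[b]]
		}
		return ids[a] < ids[b] // deterministic tie-break by ID
	})
	return ids
}

func main() {
	// Hypothetical per-retriever rankings, best match first.
	dense := []string{"m1", "m2", "m3"}
	sparse := []string{"m2", "m4"}
	bm25 := []string{"m2", "m1"}

	fmt.Println(FuseRRF(60, dense, sparse, bm25))
}
```

A memory that ranks well in several retrievers, such as `m2` here, rises above one that tops only a single list, which is the behavior a hybrid search is after.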

Additional capabilities include memory compaction (merge redundant entries), rebuild, manual creation/editing, and vector manifold visualization (Top-K distribution & CDF curves). See the documentation for setup details.

Gallery

Screenshots (images not included here): Chat, Container, Providers, File Manager, Scheduled Tasks, Token Usage.

Architecture

flowchart TB

subgraph Clients [" Clients "]

direction LR

CH["Channels<br/>Telegram · Discord · Feishu · QQ<br/>Matrix · WeCom · WeChat · Email"]

WEB["Web UI (Vue 3 :8082)"]

end

CH & WEB --> API

subgraph Server [" Server · Go :8080 "]

API["REST API & Channel Adapters"]

subgraph Agent [" In-process AI Agent "]

TWILIGHT["Twilight AI SDK<br/>OpenAI · Anthropic · Google"]

CONV["Conversation Flow<br/>Streaming · Sentinel · Loop Detection"]

end

subgraph ToolProviders [" Tool Providers "]

direction LR

T_CORE["Memory · Web Search<br/>Schedule · Contacts · Inbox"]

T_EXT["Container · Email · Browser<br/>Subagent · Skill · TTS<br/>MCP Federation"]

end

API --> Agent --> ToolProviders

end

PG[("PostgreSQL")]

QD[("Qdrant")]

BROWSER["Browser Gateway<br/>(Playwright :8083)"]

subgraph Workspace [" Workspace Containers · containerd "]

direction LR

BA["Bot A"] ~~~ BB["Bot B"] ~~~ BC["Bot C"]

end

Server --- PG

Server --- QD

ToolProviders -.-> BROWSER

ToolProviders -- "gRPC Bridge over UDS" --> Workspace

Sub-projects Born for This Project

- Twilight AI — A lightweight, idiomatic AI SDK for Go — inspired by Vercel AI SDK. Provider-agnostic (OpenAI, Anthropic, Google), with first-class streaming, tool calling, MCP support, and embeddings.

Roadmap

Please refer to the Roadmap for more details.

Development

Refer to CONTRIBUTING.md for development setup.

LICENSE: AGPLv3

Copyright (C) 2026 Memoh. All rights reserved.